This page is a part of CVprimer.com, a wiki devoted to computer vision. It focuses on low level computer vision, digital image analysis, and applications. The exposition is geared towards software developers, especially beginners. The wiki contains discussions of computer vision and related issues, mathematics, algorithms, code examples, source code, and compiled software. If you have any questions or suggestions, please contact me directly.

Image search


In this article we review some attempts to create a visual image search engine. The main interest is in image search technology that is independent of color and, of course, independent of tags. In some areas, such as radiology, all images are gray scale. See also our blog Computer Vision for Dummies.


CBIR = Content based image retrieval

CBIR is based on collecting "descriptors" (texture, color histogram, etc) from the image and then looking for images with similar descriptors.
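
As an illustration of what a "descriptor" can look like, here is a minimal sketch of a gray-level histogram and a distance between two such histograms. The function names, the number of bins, and the tiny 2x2 "images" are assumptions made for the example; they are not taken from any particular CBIR system.

```python
def gray_histogram(image, bins=4, max_value=255):
    """Normalized histogram of gray levels; the image is a list of rows."""
    counts = [0] * bins
    total = 0
    for row in image:
        for value in row:
            # Map the gray level to a bin index in 0..bins-1.
            index = min(value * bins // (max_value + 1), bins - 1)
            counts[index] += 1
            total += 1
    return [count / total for count in counts]


def l1_distance(a, b):
    """Sum of absolute differences between two descriptors."""
    return sum(abs(x - y) for x, y in zip(a, b))


# Hypothetical 2x2 gray scale images.
dark = [[10, 20], [30, 200]]
dark_shuffled = [[200, 30], [20, 10]]   # same pixels, different arrangement
bright = [[250, 240], [230, 60]]

same = l1_distance(gray_histogram(dark), gray_histogram(dark_shuffled))
different = l1_distance(gray_histogram(dark), gray_histogram(bright))
print(same, different)
```

The two "dark" images get identical descriptors even though their pixels are arranged differently. That is exactly the kind of lost information the questions below are about.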

This can work, but the question is what would guarantee that it will. More narrowly, what information about the image should be passed to the computer to ensure that the computer will succeed in finding good matches?

Option 1: we pass all information. Then, this could work. For example, the computer represents every 100x100 image as a point in the 10,000-dimensional space and then runs clustering. First, this may be impractical and, second, does it really work? Will the one-object images form a cluster? Or maybe a hyperplane? One thing is clear: these images will be very close to the rest because the difference between one object and two may be just a single pixel.
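
The last point can be checked on a toy example. The sketch below uses 5x5 binary images (25 dimensions instead of 10,000) and the Euclidean distance; both choices are assumptions made only to keep the example small.

```python
import math


def flatten(image):
    """Turn a 2D image (list of rows) into one long vector."""
    return [pixel for row in image for pixel in row]


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def blank(size):
    return [[0] * size for _ in range(size)]


size = 5

# One object: a horizontal bar across the middle row.
horizontal_bar = blank(size)
for col in range(size):
    horizontal_bar[2][col] = 1

# Two objects: the same bar with its middle pixel removed.
broken_bar = [row[:] for row in horizontal_bar]
broken_bar[2][2] = 0

# One object again: a vertical bar down the middle column.
vertical_bar = blank(size)
for row in range(size):
    vertical_bar[row][2] = 1

d_one_vs_two = euclidean(flatten(horizontal_bar), flatten(broken_bar))
d_one_vs_one = euclidean(flatten(horizontal_bar), flatten(vertical_bar))
print(d_one_vs_two, d_one_vs_one)
```

Here the one-object image is closer to a two-object image (distance 1) than to another one-object image (distance about 2.83), so there is no reason to expect the one-object images to cluster together.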

Option 2: we pass some information. What if you pass information that cannot possibly help to classify the images the way we want? For example, you may pass just the value of the (1,1) pixel, or the number of 1’s, or 0’s, or their proportion. Who will make sure that the relevant information (adjacency) isn’t left out? The computer doesn’t know what is relevant; it hasn’t learned “yet”. If it’s the human, then he would have to solve the problem first, if not algorithmically then at least mathematically.
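
A small sketch of how a descriptor can leave adjacency out. The two 4x4 binary images and the helper names are hypothetical; the "adjacent pairs" count is just one simple way to make adjacency visible, not a recommended descriptor.

```python
def count_ones(image):
    """The 'number of 1's' descriptor."""
    return sum(sum(row) for row in image)


def adjacent_pairs(image):
    """Number of horizontally or vertically adjacent pairs of 1's."""
    rows, cols = len(image), len(image[0])
    pairs = 0
    for r in range(rows):
        for c in range(cols):
            if image[r][c] != 1:
                continue
            if c + 1 < cols and image[r][c + 1] == 1:
                pairs += 1
            if r + 1 < rows and image[r + 1][c] == 1:
                pairs += 1
    return pairs


# One 2x2 object.
block = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]

# Four isolated pixels.
scattered = [
    [1, 0, 1, 0],
    [0, 0, 0, 0],
    [1, 0, 1, 0],
    [0, 0, 0, 0],
]

print(count_ones(block), count_ones(scattered))
print(adjacent_pairs(block), adjacent_pairs(scattered))
```

The number of 1's is the same for both images, so a computer given only that number cannot tell one object from four.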

Thus, CBIR shares some of the problems of machine learning.

Conclusions:

- Visual image search engines (below) exist only as experimental prototypes (demos, toys, etc). Worse yet, many make broad claims with nothing to back them up. If you have the technology, how hard is it to create a little web-based demo?

- Most demos work with small collections of images, with no upload feature, which makes testing impossible.

- When testing is possible, the results are questionable.

- The only well developed approaches are based on the distribution of colors, texture, and image segmentation.

- In the image search context, Fourier transform and wavelet transform have been mostly used for image compression.

- The engines provide only “likeness” search, which is very subjective and obscures the fact that the image analysis methods are inadequate.

- “User feedback”, “learning”, and “semantic” features obscure the fact that the image analysis methods are inadequate.

Will the image analysis methods become adequate for CBIR any time soon?

Pixcavator image search (PxSearch)

The distribution of sizes of objects can be used in image search. In other words, we match images based on their busyness.

The analysis follows our algorithm for Grayscale Images. The count of objects is not significantly affected by rotations. The output for the original 640×480 fingerprint in this image is 3121 dark and 1635 light objects. For the rotated version, it is 2969-1617. By considering only objects with area above 50 pixels, the results are improved to 265-125 and 259-124, respectively.
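
The effect of an area threshold can be sketched on a binary image. This is not the Grayscale Images algorithm referred to above; it is a plain flood fill over 4-connected pixels, and the function names and the sample image are assumptions made for illustration.

```python
from collections import deque


def component_areas(image):
    """Areas of the 4-connected components of nonzero pixels."""
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    areas = []
    for r in range(rows):
        for c in range(cols):
            if image[r][c] == 0 or seen[r][c]:
                continue
            # Breadth-first flood fill starting from an unvisited object pixel.
            seen[r][c] = True
            queue = deque([(r, c)])
            area = 0
            while queue:
                y, x = queue.popleft()
                area += 1
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and image[ny][nx] != 0 and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            areas.append(area)
    return areas


def count_objects(image, min_area=1):
    """Number of objects whose area is at least min_area pixels."""
    return sum(1 for area in component_areas(image) if area >= min_area)


image = [
    [1, 1, 0, 0, 1],
    [1, 1, 0, 0, 0],
    [0, 0, 0, 1, 0],
    [1, 0, 0, 0, 0],
]

# Rotate the image by 90 degrees clockwise.
rotated = [list(row) for row in zip(*image[::-1])]

print(count_objects(image), count_objects(rotated))
print(count_objects(image, min_area=2), count_objects(rotated, min_area=2))
```

A rotation by 90 degrees only moves pixels around, so the counts match exactly. For other angles the pixels have to be resampled, which is where small objects appear and disappear and the threshold starts to matter.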

Stretching the image does not affect the number of objects. The distribution of objects with respect to area is affected but in an entirely predictable way. Shrinking makes objects merge. If the goal, however, is to count and analyze large features, limited shrinking of the image does not affect the outcome. The counting is also stable under “salt-and-pepper” noise or mild blurring. This is not surprising. After all, what the algorithm is designed to compute corresponds to human perception. The only case when there is a mismatch is when the size, or the contrast of the object, or its connection to another object are imperceptible to the human eye.

See also Industrial quality inspection.

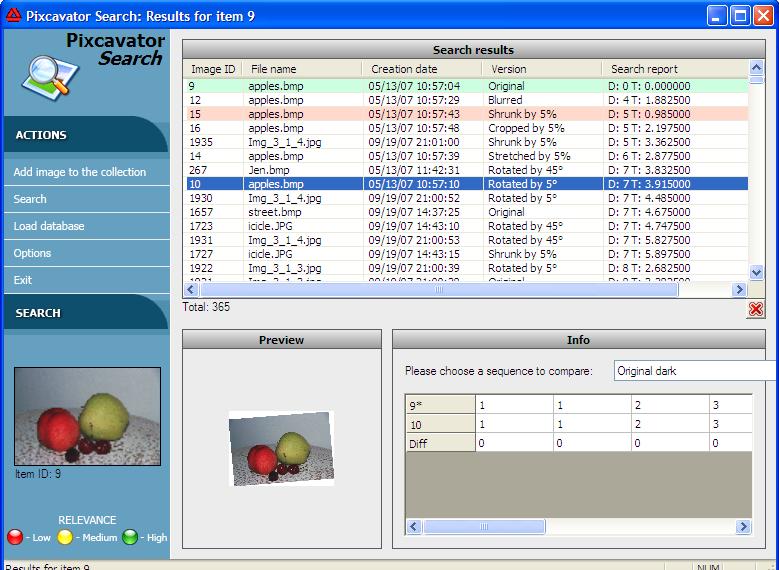

PxSearch searches within your collection of images for pictures similar to the one that you choose. The program is available upon request. A web application is under development.

This test program adds an image to the collection, along with a few of its versions (rotated, blurred, etc). A total of 8 images is analyzed for each image you add to the collection. This feature is needed to help you see when the algorithm works well and when it does not. The idea is that these versions of the image have to appear near the top when you search for similar images. Appropriate images have to be of good quality, with several larger objects and little pixelation, noise, etc. Faces, simple landscapes, and some medical images work. Fingerprints don't. But I think many would work if the thumbnails were larger. Computation isn’t fast in the first place, and analyzing the extra 7 versions of the image takes extra time. So, I had to shrink the images to 100x100 to make the processing time reasonable.

PxSearch computes distributions of objects of each size. Those are called "signatures". The matching is also very simple. To match two images, their signatures are compared by means of the weighted sum of differences. The end result is that the images are matched based on a quantifiable similarity. In particular, copies of the image are found even if they are distorted. A good application could be in copyright filtering [1].
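
A minimal sketch of signature matching, under stated assumptions. The bin edges, the weights, and the lists of object areas below are made up for the example; the actual bins and weights used by PxSearch are not given here.

```python
def signature(areas, bin_edges):
    """Count the objects falling into each area bin [edge_i, edge_{i+1})."""
    counts = [0] * (len(bin_edges) - 1)
    for area in areas:
        for i in range(len(counts)):
            if bin_edges[i] <= area < bin_edges[i + 1]:
                counts[i] += 1
                break
    return counts


def signature_distance(sig_a, sig_b, weights):
    """Weighted sum of absolute differences between two signatures."""
    return sum(w * abs(a - b) for w, a, b in zip(weights, sig_a, sig_b))


# Hypothetical area bins (in pixels) and weights favoring larger objects.
bin_edges = [1, 10, 50, 200, 10**9]
weights = [0.1, 0.5, 1.0, 2.0]

# Hypothetical object areas found in three images.
original = [3, 4, 12, 60, 75, 300]
distorted = [2, 12, 55, 80, 310]        # a small object lost, sizes shifted
unrelated = [5, 6, 7, 8, 9, 15, 20]

sig_original = signature(original, bin_edges)
sig_distorted = signature(distorted, bin_edges)
sig_unrelated = signature(unrelated, bin_edges)

print(signature_distance(sig_original, sig_distorted, weights))
print(signature_distance(sig_original, sig_unrelated, weights))
```

With these made-up numbers the distorted copy ends up far closer to the original than the unrelated image does. Giving small objects a low weight is one way to make the comparison tolerant of noise and blur.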

The main difference from some common approaches to image matching is that Pixcavator Search takes into account some global features (see Local vs. global).

cellAnalyst can also be thought of as a visual image search engine. The difference is that its search is based on concrete data collected from the image: the quantity, sizes, shapes, and locations of cells. As a result, it does not share the problems of CBIR.

Visual image search engines

This part needs editing because for the most part it looks like a cemetery...

Riya

Update 12/07. “Likeness” image search for shopping. Searches sometimes make sense but also seem cooked up. Even then the engine is easy to trick: a search for a watch with a secondary dial returned many watches without. Bottom line, nothing new after a whole year.

11/06. Face search and identification in photo albums. Experiment: it may return a sneaker. This avenue has since been abandoned for “likeness” search for online shopping, like.com. What a joke! Available only within a few very narrow categories: shoes, watches, etc. Whether it actually uses image analysis is unclear. Experiment: an image of an analogue watch returns an image of a digital watch. [3]

Xcavator

[4] from CogniSign

November 2007. The application can search for images based on tags, colors, and partially shape, all automatically. What makes it stand out is a semiautomatic option. It is common that images are matched by studying them as a whole; for example, using the color histogram of the image. However, you may be interested in matching only a part of the image in order to find different images depicting the same object. Then the user needs to be able to select that object or a part of it. The method works as follows: by placing key points on the image you help the computer concentrate on the important parts.

Consider the image of the Golden Gate Bridge. You put key points in the right parts of the bridge: the metal parts of the frame and the holes in the frame. The end result is a series of matches of the bridge. The points are chosen in such a way that there may be no other image that would have pixels of those colors located the same way with respect to each other.

So far, I have been unable to evaluate how much shapes contribute to image matching. The reason is that the matches are made first on the basis of color and tags. You would never know whether the match was based entirely on colors and tags or on shape as well. There is no image upload and that’s what prevented me from testing it any further. This situation is not uncommon in this area; just read the rest of the article.

The purpose of xcavator.net is to help customers search huge collections of stock images. That seems to work fine.

Blog reports: November 2007 [5], October 2006 [6].

Yotophoto

Search based on a single choice of color.

3d seek

[8] from Imaginestics

Search for engineer's sketches. Based on shape. Experiment: a sketch of a screw returns a washer.

ALIPR

Automatic image tagging. Based on color. Experiment: among suggested tags for an image of coins are “landscape”, “waterfall”, “building”, etc. These are for a picture of apples and cherries on the table: indoor, man-made, antique, decoration, old, toy, cloth (this was the only applicable one), landscape, plane, car, transportation, flower, textile, food, drink.

Update (1/08). Unlike with many others, uploading and, therefore, testing is possible.

First I tried a simple image of ten coins on dark background. These are the tags: landscape, ice, waterfall, building, historical, ocean, texture, rock, natural, marble, sky, snow, frost, man-made, indoor. For a portrait of Einstein: animal, snow, landscape, mountain, lake, cloud, building, tree, predator, wild_life, rock, natural, pattern, mineral, people. Not very encouraging.

Then I read "About us". Turns out ALIPR "is not designed for black&white photos". Strange idea, considering that b&w images are simpler than color ones, and if you can't solve a simpler problem, how can you expect to solve a more complex one? I tried this color image. These are the tags: texture, red, food, indoor, natural, candies, bath, kitchen, painting, fruit, people, cloth, face, female, hair_style.

This application was supposed to learn [10] from its users. Clearly it hasn’t learnt anything. In fact, there seems to be no change at all after a whole year, and there have been no blog posts since last January.

piXlogic

Relies on color, texture, and image segmentation. No demo.

ImageSeeker

[12] from LTU Technologies

Based on color, texture, and image segmentation. No upload - the demo applies to a fixed image collection. Whether it actually uses image analysis is unclear.

eVision

Relies on color, texture, and image segmentation. Online demo is provided but it does not work (2/08). Their example: an image of a leopard returns an image of a lion.

imgSeek

Originally, only photo album management. Based on the wavelet transform, which is simply a sophisticated averaging method. The best I've found so far. Produces meaningful results. Based on solid mathematics, there is a paper [15] describing everything. It's still not exactly what I would like to see. Example: an image of sunset on a beach returns bright headlights in a city. Experiment: an image of a fingerprint is not matched with its rotated copy. This simply confirms what is discussed in the paper - the reliability of matching quickly deteriorates under rotations, distortions, etc.
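
To see in what sense a wavelet transform is an averaging method, here is a sketch of the simplest case, the unnormalized Haar wavelet, applied to one row of pixels. The choice of the Haar wavelet and the sample values are assumptions made for illustration; this is not a description of what imgSeek actually computes.

```python
def haar_step(values):
    """One level: pairwise averages plus the details needed to undo them."""
    averages = [(values[i] + values[i + 1]) / 2 for i in range(0, len(values), 2)]
    details = [(values[i] - values[i + 1]) / 2 for i in range(0, len(values), 2)]
    return averages, details


def haar_decompose(values):
    """Full decomposition; the length must be a power of two."""
    all_details = []
    current = list(values)
    while len(current) > 1:
        current, details = haar_step(current)
        # Coarser details go in front of the finer ones.
        all_details = details + all_details
    return current + all_details


row = [8, 6, 2, 4]
print(haar_step(row))
print(haar_decompose(row))
```

The first coefficient is the overall average of the row, and the rest record how the averages change from coarse to fine. Keeping only a few of the coefficients gives a compact description of the image, but one that is tied to pixel positions, which is consistent with matching getting worse under rotations.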

Marvel

[16] from IBM

Multimedia search. Report (2006): "A functional engine may not come out for another three to five years".

Ookles

FAQ: "will be launching their alpha version to a small group of users on February 28th, 2006".

Polar Rose

[18] Sweden

The exact purpose is not quite clear. Founder: "- sort, search and manage online albums (yup, I stole that one from Riya and Ookles), - get feeds from photo sites with pictures of people you like, - find out who a person is on a picture you come across while browsing, - click on a face on a news site to find information, links or other sites with this person". The founder’s recent Ph.D. thesis is about conversion of 2D to 3D.

A new (Dec '07) promise is this: a plug-in for Firefox is given away and the one for Internet Explorer is coming soon. In private beta. They promise to launch face matching “later this year” ('07).

Pixsta

Similar to like.com. RedOrbit, 1/28/07: Pixsta's commercial director Steve Dukes said his company has even started cataloging photos of vacation destinations. "Say you want to visit someplace with vistas like the Maldives, but at 10 percent of the price?" Dukes said. "That kind of search is possible." (He is serious!) The only application of Pixsta's technology that I can find is this shoe store, ChezImedla. You click on a shoe and it is supposed to give you similar shoes. "Similar" to a pair of sandals are fuzzy slippers and high heels, because all three are kind of white.

Retrievr

A square gives you a forest; the forest rotated does not give you the original forest. The last time I tried (9/07), it didn't work.

Imense

UK [21]

The interest is in semantic tagging based on image analysis. The methods are not explained. Examples of image analysis [22] are very optimistic (“hair”, “smile”, “woman”, etc). What separates them from the pack is the test they have conducted with the help of a large computer grid in the UK, so that the engine has been tested on millions of images instead of thousands.

Idee

I tried (12/07) the image of seeds and the results were (all gray scale of course):

- a keyboard,

- a multistory building with many windows,

- soccer stadium,

- a big crowd, etc.

They do seem similar in busyness to the original...

Photology

Several "content"-based tags are created automatically. They seem to be based on color only. Demo (2/08): a search for "beach" gives some images of the sky as well. Demo: a search for "sunset" produced a page of indoor scenes. $19.

Piccolator

Face identification. Review here [26].
