Computer Vision & Math contains: mathematics courses, covers: image analysis and data analysis, provides: image analysis software. Created and run by Peter Saveliev.

Visual image search engines


This article needs editing because for the most part it looks like a cemetery...

For a more in-depth discussion, see Image-to-image search (aka Similarity Image Search).

3d seek

[1] from Imaginestics

Search for engineer's sketches. Based on shape. Experiment: a sketch of a screw returns a washer.

ALIPR

Automatic image tagging. Based on color. Experiment: among suggested tags for an image of coins are “landscape”, “waterfall”, “building”, etc. These are the tags for a picture of apples and cherries on the table: indoor, man-made, antique, decoration, old, toy, cloth (this was the only applicable one), landscape, plane, car, transportation, flower, textile, food, drink.

Update (10/08). New report [3] - nothing new.

Update (1/08). Unlike with many others, uploading, and therefore testing, is possible.

First I tried a simple image of ten coins on dark background. These are the tags: landscape, ice, waterfall, building, historical, ocean, texture, rock, natural, marble, sky, snow, frost, man-made, indoor. For a portrait of Einstein: animal, snow, landscape, mountain, lake, cloud, building, tree, predator, wild_life, rock, natural, pattern, mineral, people. Not very encouraging.

Then I read "About us". Turns out ALIPR "is not designed for black&white photos". Strange idea considering that b&w images are simpler than color and if you can't solve a simpler problem how can you expect to solve a more complex one? I tried this color image. These are the tags: texture, red, food, indoor, natural, candies, bath, kitchen, painting, fruit, people, cloth, face, female, hair_style.

This application was supposed to learn [4] from its users. Clearly it hasn’t learned anything: there seems to be no change at all after a whole year, and there have been no blog posts since last January.

eVision

Relies on color, texture, and image segmentation. Online demo is provided but it does not work (2/08). Their example: an image of a leopard returns an image of a lion.

Gazopa

[6] (what an awful name!) from Hitachi.

Gazopa is a new visual search engine that is “a venture project inside Hitachi”.

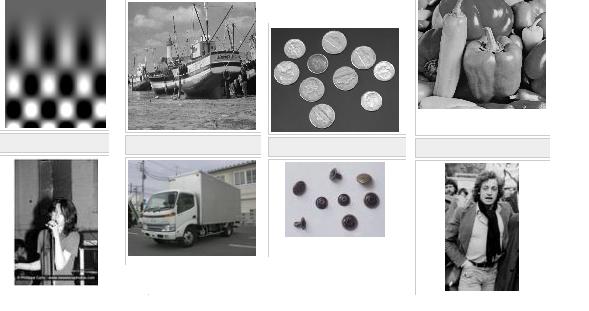

I tried its Facebook application (01/09). I uploaded a few standard images and a few test images of my own and ran Gazopa. Some of the matches were awful while others were sort of meaningful. See for yourselves. The first match is displayed under the target image.

Gazopa also found a cropped copy of the “cameraman”, but not a rotated copy. The inability to handle rotations is a common problem with almost all visual search engines.

As for the underlying technology, the site says that “GazoPa enables users to search for a similar image from characteristics such as a color or a shape extracted from an image itself” and nothing more. So, even what they consider similar is unknown.

Google image search

The paper isn’t about improving image search in general (especially visual image search and CBIR). It is specifically about Google image search (and indirectly other search engines, MSN, Yahoo, etc). The goal is to improve it. It is currently based on surrounding text and as a result you get a lot of irrelevant images. Essentially, they add some image analysis to this approach. What kind? Not the best kind – “descriptors”. So there will be no analysis of the content of the image (see Fields related to computer vision). Even so, the descriptors will help to evaluate similarity between images - to a certain degree.

To summarize, some similarity measure plus hyperlinks - that will help with improving the search results for sure. Meanwhile, image search, image recognition etc remain unsolved.
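One way to read "some similarity measure plus hyperlinks" is a PageRank-style ranking in which pairwise image similarities play the role of links. The sketch below assumes that reading, which the text above does not confirm. The similarity scores, the damping value, and the function name `rank_by_similarity` are all made up for illustration.

```python
def rank_by_similarity(similarity, damping=0.85, iterations=50):
    """Power iteration on a column-normalized similarity matrix.

    similarity[i][j] is a non-negative score between images i and j.
    Returns one rank value per image; the values sum to 1 as long as
    every image has at least one nonzero similarity.
    """
    n = len(similarity)
    # Normalize each column so an image splits its "vote" among its neighbors.
    col_sums = [sum(similarity[i][j] for i in range(n)) for j in range(n)]
    rank = [1.0 / n] * n
    for _ in range(iterations):
        rank = [
            (1.0 - damping) / n
            + damping * sum(
                similarity[i][j] / col_sums[j] * rank[j]
                for j in range(n)
                if col_sums[j] > 0
            )
            for i in range(n)
        ]
    return rank


# Hypothetical scores: images 0, 1, 2 resemble each other; image 3 is an outlier.
similarity = [
    [0.0, 0.9, 0.8, 0.1],
    [0.9, 0.0, 0.7, 0.0],
    [0.8, 0.7, 0.0, 0.1],
    [0.1, 0.0, 0.1, 0.0],
]

ranks = rank_by_similarity(similarity)
best_first = sorted(range(len(ranks)), key=lambda i: ranks[i], reverse=True)
print(best_first)
```

The image that resembles the most other results rises to the top and the outlier sinks to the bottom. Nothing here looks at what the images actually depict, which is the point made above.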

It is unclear if the recent similarity search is based on this. Reviews are here and here. The verdict: not so good.

Microsoft released its own similarity search a few months before. It would be interesting to test and see which one is better (or not as bad). One point in favor of Microsoft is that Google didn’t index all images.

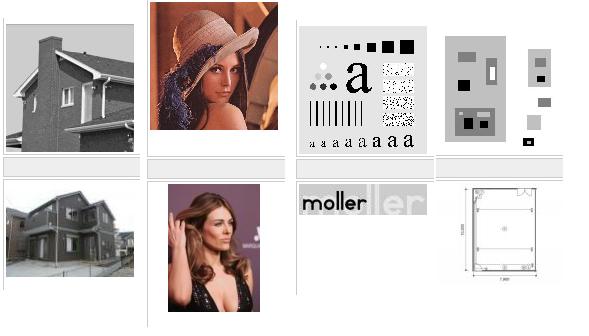

Update (6/11): New version is released. The screenshot speaks for itself:

Idee

Update (5/08):

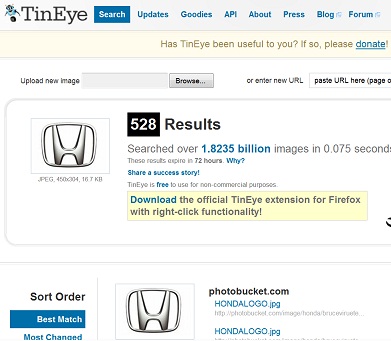

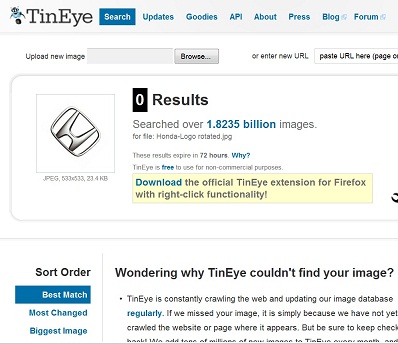

They don’t make wild claims about being able to do face identification or similar (unsolved) problems. The goal seems very straightforward: find copies of images. At this task TinEye, Idee's search engine, does a good job. It even finds copies that have been modified - noise, color, stretch, crop, some photoshopping. It does not do well with rotation. That's a major drawback (compare to Lincoln from MS Research) if you are interested in science applications.

They call it "reverse image search" which does not make sense to me. The "direct" image search as we know it is: given keyword find images. The "reverse" then should be: given image find keywords, i.e. categories. That's not what is happening here.

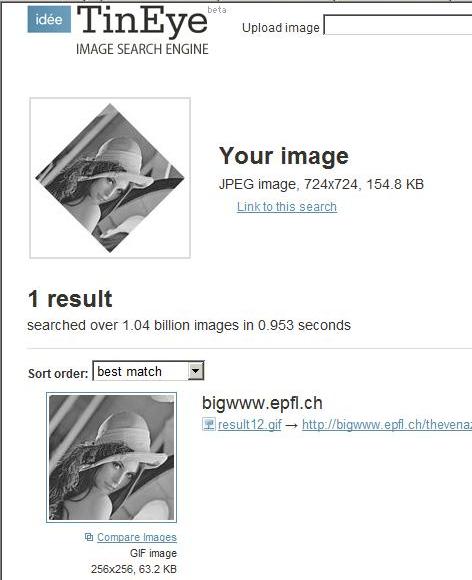

These are the images that I tried.

Barbara: found both color and bw copies and a slightly cropped version.

Marilyn: found cropped and stretched versions, and even an edited (defaced) version.

Lenna: found both color and bw, but not partial or rotated versions (even though a rotated version is in the index).

Tried again (3/09) - same problem with rotation:

Still (1/11) can't handle rotated versions:

ImageSeeker

[9] from LTU Technologies

Based on color, texture, and image segmentation. No upload - the demo applies to a fixed image collection. Whether it actually uses image analysis is unclear.

Imense

UK [10]

The interest is in semantic tagging based on image analysis. The methods are not explained. Examples of image analysis [11] are very optimistic (“hair”, “smile”, “woman” etc). What separates them from the pack is the test they have conducted with the help of a large computer grid in the UK, so that the method has been tested on millions of images instead of thousands.

imgSeek

Originally, only photo album management. Based on the wavelet transform, which is simply a sophisticated averaging method. The best I've found so far. Produces meaningful results. It is based on solid mathematics, and there is a paper [13] describing everything. It's still not exactly what I would like to see. Example: an image of sunset on a beach returns bright headlights in a city. Experiment: an image of a fingerprint is not matched with its rotated copy. This simply confirms what is discussed in the paper - the reliability of matching quickly deteriorates under rotations, distortions, etc.
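To illustrate the "averaging" remark, here is a minimal sketch of the averaging step of a 2D Haar transform applied to a tiny grayscale image stored as nested lists. This is not imgSeek's code. The function names are made up, and the method in the paper also works with the wavelet detail coefficients, which are left out here. The sketch only shows that a coarse signature built from block averages changes when the image is rotated.

```python
def haar_average(image):
    """One level of 2D Haar averaging: replace each 2x2 block by its mean."""
    rows, cols = len(image), len(image[0])
    return [
        [
            (image[r][c] + image[r][c + 1] + image[r + 1][c] + image[r + 1][c + 1]) / 4.0
            for c in range(0, cols, 2)
        ]
        for r in range(0, rows, 2)
    ]


def coarse_signature(image, levels):
    """Apply the averaging step repeatedly to get a small 'thumbnail' signature."""
    for _ in range(levels):
        image = haar_average(image)
    return image


def signature_distance(a, b):
    """Sum of absolute differences between two equally sized signatures."""
    return sum(abs(x - y) for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b))


def rotate90(image):
    """Rotate a square image by 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


# A 4x4 test image: bright top half, dark bottom half.
image = [
    [200, 200, 200, 200],
    [200, 200, 200, 200],
    [10, 10, 10, 10],
    [10, 10, 10, 10],
]

original = coarse_signature(image, 1)
rotated = coarse_signature(rotate90(image), 1)

print(signature_distance(original, original))  # the image against itself
print(signature_distance(original, rotated))   # the image against its rotated copy
```

The image is at distance zero from itself but far from its own rotated copy, because the averages are tied to fixed positions in the pixel grid.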

Imprezzeo

[14]. “Coming soon”.

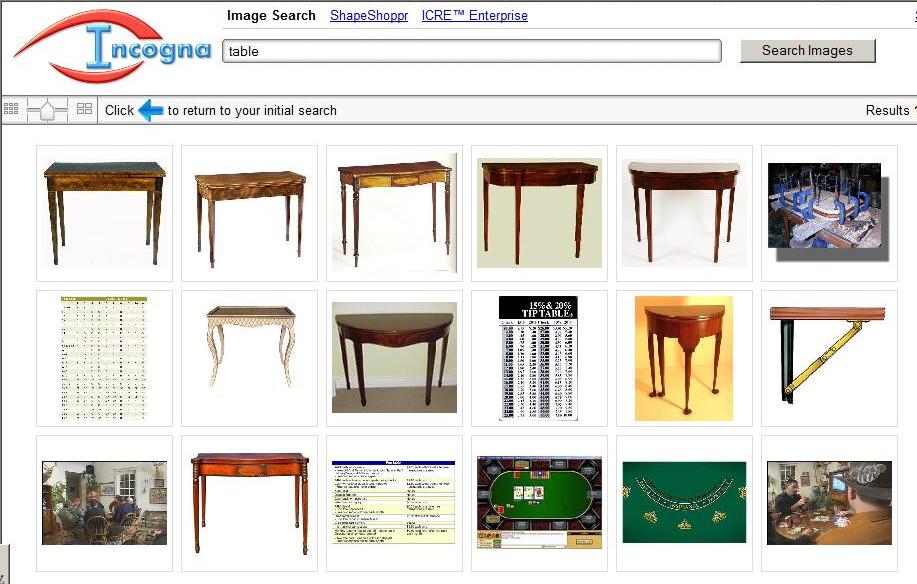

Incogna

The screenshot tells the whole story. The image of a table in the upper left corner is the query image. The rest are supposed to be “similar”. What is the image filled with numbers doing here you ask? Hmm… Oh yes, it’s a table of numbers!

Lincoln from MS Research

I tested it (2007) and the search seems stable under rotation, cropping, noise and loss of color.

Marvel

[16] from IBM

Multimedia search. Report (2006): "A functional engine may not come out for another three to five years".

Milabra

Milabra claims that it can categorize images, “from puppies to porn”:

…when searching through a library of images for dogs, Milabra doesn’t need to constantly compare each image with its database of known ‘dog’ images - instead, it can look for traits that it has learned to associate with “doggyness”…

The two examples in the demo are “beach” and “dog”. You upload an image with people on the beach, click “Search” and you get a page of beach photos... Wait, you don’t get to upload anything – this is just a video! So, there is no way to test their claims. Unfortunately, this is not unusual in this area and in computer vision in general.

If your software can recognize a puppy in an image (95% of the time as you claim), it should be easy for you to demonstrate this ability. Create a little web application (or desktop, I don’t care) that allows me to upload my own image which is then identified as “puppy” (or “tree”, or “street”, I don't care). There is no such program. Why not? The answer is obvious.

Ookles

FAQ: "will be launching their alpha version to a small group of users on February 28th, 2006".

Photology

Several "content"-based tags are created automatically. They seem to be based on color only. Demo (2/08): a search for "beach" gives some images of the sky as well. Demo: a search for "sunset" produced a page of indoor scenes. $19.

Picasa

[19] launched a face recognition feature. By most accounts it does not work well.

Picollator

Face identification. Review here [21] and discussion here [22].

piXlogic

Relies on color, texture, and image segmentation. No demo.

Pixsta

Update (5/08): Launched an image search engine [25], again? Testing is closed...

RedOrbit, 1/28/07: Pixsta's commercial director Steve Dukes said his company has even started cataloging photos of vacation destinations. "Say you want to visit someplace with vistas like the Maldives, but at 10 percent of the price?" Dukes said. "That kind of search is possible."

The only application of Pixsta's technology that I can find is this shoe store, ChezImedla. You click on a shoe and it is supposed to give you similar shoes. "Similar" to a pair of sandals are fuzzy slippers and high heels, because all three are kind of white.

Polar Rose

[26] Sweden

The exact purpose is not quite clear. Founder: "-sort, search and manage online albums (yup, I stole that one from Riya and Ookles), -get feeds from photo sites with pictures of people you like, - find out who a person is on a picture you come across while browsing, - click on a face on a news site to find information, links or other sites with this person". The founder’s recent Ph.D. thesis is about conversion of 2D to 3D.

A new (Dec '07) promise is this: a plug-in for FF is given away and the one for IE is coming soon. In private beta. They promise to launch face matching “later this year” ('07).

Retrievr

Based on the same research as imgSeek. A square gives you a forest; the forest rotated does not give you the original forest. The last time I tried it (9/07), it didn't work.

Riya

Update 12/07. “Likeness” image search for shopping. Searches sometimes make sense but also seem cooked up. Even then the engine is easy to trick: a search for a watch with a secondary dial returned many watches without. Bottom line, nothing new after a whole year.

11/06. Face search and identification in photo albums. Experiment: it may return a sneaker. This avenue has since been abandoned for “likeness” search for online shopping, like.com. What a joke! Available only within a few very narrow categories: shoes, watches, etc. Whether it actually uses image analysis is unclear. Experiment: an image of an analogue watch returns an image of a digital watch. [29]

VideoSurf

[30] “Unveils First Computer Vision Search for Video”. Private beta.

Xcavator

[31] from CogniSign

November 2007. The application can search for images based on tags, colors, and partially shape - automatically.

What makes it stand out is a semiautomatic option. Images are commonly matched by studying them as a whole; for example, using the color histogram of the image. However, you may be interested in matching only a part of the image in order to find different images depicting the same object. Then the user needs a way to select that object or a part of it. The method works as follows: by placing key points on the image you help the computer concentrate on the important parts.

Consider the image of the Golden Gate Bridge. You put key points in the right parts of the bridge: the metal parts of the frame and the holes in the frame. The end result is a series of matches of the bridge. The points are chosen in such a way that there may be no other image that would have pixels of those colors located the same way with respect to each other.
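The whole-image approach mentioned above, matching by color histogram, can be sketched as follows. This is an illustration only and not Xcavator's method. The bin count, the function names, and the toy pixel lists are assumptions. It shows why a histogram alone cannot tell where the colors are located, which is the gap the key points are meant to fill.

```python
from collections import Counter


def color_histogram(pixels, bins_per_channel=4):
    """Normalized histogram of quantized RGB colors for a flat list of pixels."""
    step = 256 // bins_per_channel
    counts = Counter((r // step, g // step, b // step) for r, g, b in pixels)
    total = len(pixels)
    return {color: n / total for color, n in counts.items()}


def histogram_intersection(h1, h2):
    """Similarity in [0, 1]: 1 means the two color distributions coincide."""
    return sum(min(share, h2.get(color, 0.0)) for color, share in h1.items())


RED, BLUE, GRAY = (220, 30, 30), (30, 30, 220), (128, 128, 128)

# Toy "images" as flat pixel lists: the first two share colors but not layout.
left_red = [RED, RED, BLUE, BLUE]
right_red = [BLUE, BLUE, RED, RED]
mostly_gray = [GRAY, GRAY, GRAY, RED]

same_colors = histogram_intersection(color_histogram(left_red), color_histogram(right_red))
different = histogram_intersection(color_histogram(left_red), color_histogram(mostly_gray))

print(same_colors)
print(different)
```

The two images with the same colors in different places get a perfect score, so the histogram by itself says nothing about how the colors are located with respect to each other.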

I was unable to evaluate how much shapes contribute to image matching. The reason is that the matches are made first based on color and tags, so you would never know whether a match was based entirely on colors and tags or on shapes as well. There is no image upload, and that’s what prevented me from testing it any further. This situation is not uncommon in this area - just read the rest of the articles here.

The purpose of xcavator.net is to help customers to search those huge collections of stock images. That seems to work fine.

Blog reports: November 2007 [32], October 2006 [33].

Yotophoto

Search based on a single choice of color.
