This site is devoted to mathematics and its applications. Created and run by Peter Saveliev.

Pixel

From Intelligent Perception

The word "pixel" has multiple meanings:

- A location within the image: two coordinates.

- A location and its value: 0 or 1 for binary, 0-255 for gray scale, 3 numbers for color.

- A little square/tile (see Cell decomposition of images).

- A unit of length.

- A unit of area.

In 3D, it's a "voxel".
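The first two meanings can be made concrete with a small sketch. It assumes images are stored as NumPy arrays indexed by (row, column), and that the three color numbers are red, green, and blue; neither convention comes from the text above, and the array contents are made up for illustration.

```python
import numpy as np

# Binary image: each pixel value is 0 or 1.
binary = np.array([[0, 1, 1],
                   [0, 1, 0]], dtype=np.uint8)

# Gray scale image: each pixel value is an integer from 0 to 255.
gray = np.array([[0, 128, 255],
                 [64, 200, 32]], dtype=np.uint8)

# Color image: each pixel carries 3 numbers (assumed here to be R, G, B).
color = np.zeros((2, 3, 3), dtype=np.uint8)
color[0, 1] = (255, 0, 0)

# A pixel as a location: two coordinates.
row, col = 0, 1

# A pixel as a location together with its value.
binary_value = int(binary[row, col])
gray_value = int(gray[row, col])
color_value = tuple(int(c) for c in color[row, col])
```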

One main thing to keep in mind while analyzing images is this simple principle: pixels are small.

This is important in two ways.

First, as the resolution increases, the analysis results should "converge" to those of the real scene depicted in the image, because the world is analog (another good principle).

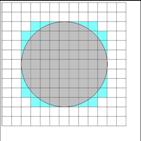

Whatever "real" (or physical) object is depicted in the image, its area computed by counting pixels (the number of pixels times the area of one pixel) can be made as close as we like to its "true" area by increasing the resolution (for a more rigorous interpretation, see [1]). See the pictures below.
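A minimal numerical sketch of this convergence, under assumptions that are not in the text: the object is a disk of radius 0.8 centered in the square [-1, 1] x [-1, 1], and a pixel belongs to the object when its center lies inside the disk. The function name `disk_area_from_pixels` is made up for this illustration.

```python
import numpy as np

def disk_area_from_pixels(radius, n):
    """Digitize a disk on an n-by-n grid covering [-1, 1]^2 and
    return its area as (number of pixels) * (area of one pixel)."""
    h = 2.0 / n                                   # side of one pixel
    centers = -1.0 + h * (np.arange(n) + 0.5)     # pixel centers along one axis
    x, y = np.meshgrid(centers, centers)
    inside = x**2 + y**2 <= radius**2             # pixel is "on" if its center is in the disk
    return inside.sum() * h * h

radius = 0.8
true_area = np.pi * radius**2

# Relative error of the pixel-count area at increasing resolutions.
errors = {n: abs(disk_area_from_pixels(radius, n) - true_area) / true_area
          for n in (16, 64, 256, 1024)}

for n, err in errors.items():
    print(f"{n:5d} x {n:<5d} relative error = {err:.6f}")
```

The relative error should shrink as the grid is refined, though not necessarily monotonically from one resolution to the next.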

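This is not that simple with length. Indeed, increasing the resolution will not by itself reduce the relative error of the measurement: a diagonal segment traced along pixel edges, for example, is a staircase whose total length is the same at every resolution. See Lengths of curves.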
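The contrast with area can be seen in a small experiment. The sketch below assumes one particular way of measuring length, which the text does not specify: the perimeter of a digitized disk is taken to be the total length of the pixel edges that separate the object from the background. The helper names `digitize_disk` and `edge_length` are hypothetical.

```python
import numpy as np

def digitize_disk(radius, n):
    """Return a boolean n-by-n mask of a disk in [-1, 1]^2 and the pixel side h."""
    h = 2.0 / n
    centers = -1.0 + h * (np.arange(n) + 0.5)
    x, y = np.meshgrid(centers, centers)
    return x**2 + y**2 <= radius**2, h

def edge_length(mask, h):
    """Total length of pixel edges between object and background."""
    padded = np.pad(mask, 1).astype(np.int8)         # surround with background
    horizontal = np.abs(np.diff(padded, axis=0)).sum()  # edges between vertical neighbors
    vertical = np.abs(np.diff(padded, axis=1)).sum()    # edges between horizontal neighbors
    return (horizontal + vertical) * h

radius = 0.8
true_length = 2 * np.pi * radius

relative_errors = {}
for n in (16, 64, 256, 1024):
    mask, h = digitize_disk(radius, n)
    relative_errors[n] = abs(edge_length(mask, h) - true_length) / true_length
    print(f"{n:5d} x {n:<5d} relative error = {relative_errors[n]:.4f}")
```

With this way of measuring, the staircase boundary of the disk has length close to 8 times the radius at any fine resolution, so the relative error stays near 4/π − 1, about 27%, instead of going to zero.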

Second, we need to analyze images in such a way that a single-pixel variation of the image is negligible. In fact, a single round of erosion or dilation, i.e., removing or adding a layer of pixels along the border of an object, will not dramatically change the area or perimeter of the object. Why? Because pixels are small.

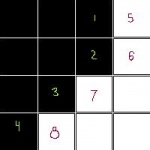
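The effect of one round of erosion or dilation on area can be checked numerically. This is a sketch under assumptions not stated in the text: the object is a digitized disk, a "layer" consists of the pixels that share an edge with the other side (4-neighborhood), and everything outside the image frame counts as background. The functions `dilate` and `erode` are written here from scratch and are not taken from any library.

```python
import numpy as np

def digitize_disk(radius, n):
    """Boolean n-by-n mask of a disk of the given radius in [-1, 1]^2."""
    h = 2.0 / n
    centers = -1.0 + h * (np.arange(n) + 0.5)
    x, y = np.meshgrid(centers, centers)
    return x**2 + y**2 <= radius**2

def dilate(mask):
    """Add every background pixel that shares an edge with the object."""
    p = np.pad(mask, 1)
    grown = p.copy()
    grown[1:, :] |= p[:-1, :]
    grown[:-1, :] |= p[1:, :]
    grown[:, 1:] |= p[:, :-1]
    grown[:, :-1] |= p[:, 1:]
    return grown[1:-1, 1:-1]      # crop back to the original frame

def erode(mask):
    """Remove every object pixel that shares an edge with the background."""
    p = np.pad(mask, 1)
    kept = p.copy()
    kept[1:, :] &= p[:-1, :]
    kept[:-1, :] &= p[1:, :]
    kept[:, 1:] &= p[:, :-1]
    kept[:, :-1] &= p[:, 1:]
    return kept[1:-1, 1:-1]

dilation_change = {}
erosion_change = {}
for n in (32, 128, 512):
    mask = digitize_disk(0.8, n)
    area = int(mask.sum())
    dilation_change[n] = (int(dilate(mask).sum()) - area) / area
    erosion_change[n] = (area - int(erode(mask).sum())) / area
    print(n, round(dilation_change[n], 4), round(erosion_change[n], 4))
```

The relative change in area should get smaller as the resolution grows, which is the sense in which a one-pixel layer is negligible.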

This works fine for geometric measurements (see also Robustness of geometry) if the topology does not change. It's not so easy for topology. The example on the right shows that adding the red pixel merges three objects and also creates a hole (white object).

Then we can say that these topological features aren't robust. In fact, the robustness can be measured in terms of how many dilations and erosions it takes to change the topology. For example,

- how many erosions does it take to split an object into two or more?

- how many dilations does it take to create a hole in an object?
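The first of these questions can be answered by brute force on a small image. The sketch below is an illustration under several assumptions: objects are 4-connected sets of pixels, one erosion removes every object pixel that shares an edge with the background, and the test image is a made-up "dumbbell" of two squares joined by a bridge. The names `count_objects`, `erosions_to_split`, and `dumbbell` are hypothetical.

```python
import numpy as np

def erode(mask):
    """Remove every object pixel that shares an edge with the background."""
    p = np.pad(mask, 1)
    kept = p.copy()
    kept[1:, :] &= p[:-1, :]
    kept[:-1, :] &= p[1:, :]
    kept[:, 1:] &= p[:, :-1]
    kept[:, :-1] &= p[:, 1:]
    return kept[1:-1, 1:-1]

def count_objects(mask):
    """Count 4-connected components of the object pixels by flood fill."""
    rows, cols = mask.shape
    seen = np.zeros((rows, cols), dtype=bool)
    count = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r, c] and not seen[r, c]:
                count += 1
                seen[r, c] = True
                stack = [(r, c)]
                while stack:
                    i, j = stack.pop()
                    for a, b in ((i - 1, j), (i + 1, j), (i, j - 1), (i, j + 1)):
                        if 0 <= a < rows and 0 <= b < cols and mask[a, b] and not seen[a, b]:
                            seen[a, b] = True
                            stack.append((a, b))
    return count

def erosions_to_split(mask, max_rounds=50):
    """Number of erosions until the object count increases, or None if it never does."""
    current = mask.copy()
    start = count_objects(current)
    for k in range(1, max_rounds + 1):
        current = erode(current)
        n = count_objects(current)
        if n > start:
            return k
        if n == 0:            # everything eroded away without splitting
            return None
    return None

def dumbbell(bridge_rows):
    """Two 9x9 squares joined by a horizontal bridge occupying the given rows."""
    mask = np.zeros((11, 25), dtype=bool)
    mask[1:10, 1:10] = True
    mask[1:10, 15:24] = True
    mask[bridge_rows, 10:15] = True
    return mask

thin = erosions_to_split(dumbbell(slice(5, 6)))    # bridge 1 pixel wide
thick = erosions_to_split(dumbbell(slice(4, 7)))   # bridge 3 pixels wide
print(thin, thick)
```

The object with the thicker bridge takes more erosions to split, so in this sense its connectedness is the more robust feature. The second question could be handled the same way, with dilations in place of erosions and a count of the background components that do not touch the image border.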
